Healthcare IT Today Podcast: 4 Opportunities to Ease the Tension Between Payers & Providers

When it comes to providers and payers, there’s no avoiding the tension that exists between the two. Ultimately, one’s revenue is the other’s cost. Providers and payers are also optimizing for different things. Providers want to ensure patients get the best care. Individual clinicians are incentivized to provide more care to serve the patient and avoid malpractice suits; at the institutional level, more procedures typically mean more revenue. Payers, on the other hand, face their own competitive dynamics as they sell to employers and individuals who want low premiums above all else.

You might be surprised to learn that, despite the inevitability of this tension, there is also plenty of opportunity in the space between providers and payers. In a recent episode of Healthcare IT Today Interviews, Steve Rowe, Healthcare Industry Lead at 3Pillar, and host John Lynn discuss why this tension exists and what can be done about it. We’ve captured the four biggest opportunities below.

1. RCM and Claims

The first opportunity is around Revenue Cycle Management (RCM) and claims. Payers have all sorts of different rules around what they will approve and what they’ll deny to balance the tension between keeping premiums low and paying for medical coverage. These rules are sometimes even group-specific.

The challenge? Providers don’t know what those rules are, which creates difficulties for the member. It’s not easy to tell in the moment what will be approved and what will be denied. That means patients may end up unhappy when a proposed treatment is denied or not paid in full (and they are balance billed).

The opportunity here is for payers to expose that logic to health systems, essentially preadjudicating payment instead of adjudicating it after the fact. The business rationale: make it easy for in-network providers to get paid in exchange for more competitive rates. Some companies are already doing this: “Glen Tullman is doing it with Transcarent; he’s essentially trying to intermediate the payer to create a new network. His whole premise to providers is, ‘Join our network because we will pay you the same day you do service,’” notes Steve. “That’s how he’s building his network with the top health systems and doctors.”

2. RCM Complexity

While building an RCM startup and working with a regional urgent care chain, Steve observed that the expertise and institutional knowledge around claims processing lived largely in the heads of the medical billing coders.

He highlights two main forms of complexity in RCM:

- Submitting the correct eligibility information (e.g., specific formatting of member ID numbers).

- Matching the right diagnostic codes to the appropriate CPT codes, which can be a large and complex matrix.

The risk here is that this institutional knowledge will be lost when these experienced medical billers retire. The processes are very manual, with reimbursements not keeping up with labor inflation. 3Pillar is leveraging AI and data mining to reverse engineer each payer’s algorithm for approvals and denials. The goal is to systematize this knowledge and flag issues proactively, rather than relying on the institutional knowledge of the billing staff.
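To make the idea of systematizing this knowledge concrete, here is a minimal sketch of a pre-submission check that covers the two forms of complexity listed above. It is an illustration only, not 3Pillar's engine: the payer names, member ID patterns, and the set of payable diagnosis/CPT pairs are all hypothetical, and a real rule set would be far larger and would be learned or maintained per payer.

```python
import re
from dataclasses import dataclass

# Hypothetical member ID formats per payer (invented for illustration).
MEMBER_ID_PATTERNS = {
    "payer_a": re.compile(r"[A-Z]{3}\d{9}"),  # three letters, then nine digits
    "payer_b": re.compile(r"\d{11}"),         # eleven digits
}

# Hypothetical (diagnosis code, CPT code) pairs assumed to be payable.
PAYABLE_PAIRS = {
    "payer_a": {("J02.9", "99213"), ("S93.401A", "73610")},
    "payer_b": {("J02.9", "99213")},
}


@dataclass(frozen=True)
class Claim:
    payer: str
    member_id: str
    diagnosis_code: str
    cpt_code: str


def flag_issues(claim: Claim) -> list[str]:
    """Return human-readable warnings; an empty list means nothing was flagged."""
    pattern = MEMBER_ID_PATTERNS.get(claim.payer)
    if pattern is None:
        return [f"no rules on file for payer {claim.payer!r}"]

    issues = []
    if not pattern.fullmatch(claim.member_id):
        issues.append("member ID does not match the payer's expected format")
    pair = (claim.diagnosis_code, claim.cpt_code)
    if pair not in PAYABLE_PAIRS.get(claim.payer, set()):
        issues.append("diagnosis/CPT pair is not in the payer's known-payable set")
    return issues


clean = flag_issues(Claim("payer_a", "ABC123456789", "J02.9", "99213"))
risky = flag_issues(Claim("payer_a", "123", "J02.9", "73610"))
```

In this sketch `clean` comes back empty and `risky` carries two warnings. Keeping the rules as data rather than code is the point: the knowledge that lives in a biller's head becomes something that can be reviewed, versioned, and updated when a payer changes its behavior.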

The vision is to integrate this RCM intelligence engine with clinical documentation tools. That way, providers are alerted in real time during the care planning process about treatments or codes that are likely to be denied by the payer. This will improve the financial experience for providers and patients alike.

3. The Need for Data Transformation

There is a significant opportunity for data transformation, as regional payers have data that lives in separate systems that don’t talk to each other. The pipes to connect these systems haven’t been built, and the data isn’t defined in the same way. Regional payers are often at a technological disadvantage compared to national payers because they still run on-premises servers and haven’t moved to the cloud. Their IT departments are swamped putting out fires and simply don’t have the resources to take on major technology modernization projects.

And here’s the rub: Self-insured employers want highly customized insurance products and plans that require flexible and configurable technology platforms. National payers have invested in modern tech stacks that can support this level of customization. However, regional payers struggle to match this same capability.

So, there’s a real need for regional payers to create a unified data platform and operating system that can integrate data from various systems (e.g., claims, population health, PBM, etc.). This would result in a simplified member experience while enabling seamless workflows for call center representatives, who often have to navigate multiple disparate systems. This is an area where working with a partner who specializes in this capability would be beneficial.
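As a rough sketch of what integrating data from various systems can mean at the smallest scale, the snippet below groups records from several source systems under one normalized member key. The source names, field names, and the normalization rule are assumptions made for illustration; real systems typically need a proper identity-matching step rather than simple string cleanup.

```python
from collections import defaultdict


def normalize_member_id(raw: str) -> str:
    """Assumed rule: IDs differ only by case, spaces, and hyphens."""
    return raw.strip().upper().replace("-", "")


def build_member_view(sources: dict[str, list[dict]]) -> dict[str, dict[str, list[dict]]]:
    """Group every record from every source system under one member key."""
    view = defaultdict(lambda: defaultdict(list))
    for source_name, records in sources.items():
        for record in records:
            key = normalize_member_id(record["member_id"])
            view[key][source_name].append(record)
    # Convert nested defaultdicts to plain dicts for predictable lookups.
    return {member: dict(by_source) for member, by_source in view.items()}


# Hypothetical extracts from two systems that format the same ID differently.
sources = {
    "claims": [{"member_id": "abc-123", "amount": 120.0}],
    "pbm": [{"member_id": " ABC123 ", "drug": "example-drug"}],
}
member_view = build_member_view(sources)
```

Here both records land under the single key `ABC123`, which is the kind of consolidated view a call center representative would otherwise have to assemble by hand across several screens.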

4. Real-Time Answers to Member Questions

Speaking of member experience, it’s now the number one concern of Vice Presidents of Benefits at self-insured employers thanks to a tight labor market. Top-tier benefits are necessary to attract and retain talent. There’s no doubt that there’s plenty of room to improve. The experience is often fragmented and frustrating as members struggle to get accurate information about coverage, costs, and provider networks.

There’s an opportunity for payers to make their medical policies and coverage algorithms more transparent and accessible to members at the point of care. Steve explains, “I’m excited about this opportunity because we’ve all been there where it’s like, ‘I just want to know if this particular provider for urgent care who is still open at 10 p.m. is in network. I can’t figure that out on the app. There’s not a search function and the call line doesn’t open until 8 a.m. tomorrow.’”

What if patients could get real-time answers to their questions? 3Pillar is making that vision a reality through chatbots powered by AI and knowledge graphs. By using AI to combine data from disparate systems, members can get accurate, up-to-date information at any time, from anywhere.

These chatbots could also help to address the challenge of call center representatives needing to navigate multiple systems to piece together an answer for a member. Steve points out one key consideration: ensuring the chatbots are fed accurate data and avoiding hallucinations. Doing so requires careful design and integration with the underlying data sources.
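One common way to limit hallucinations is to have the assistant answer only from structured records and say so when a record is missing. The sketch below shows that pattern for the urgent care question in Steve's example. It is not a description of how 3Pillar's chatbots work: the `Provider` fields, the directory, and the plan name are hypothetical, and opening hours are assumed not to cross midnight.

```python
from dataclasses import dataclass
from datetime import time


@dataclass(frozen=True)
class Provider:
    name: str
    in_network_plans: frozenset
    opens: time
    closes: time  # assumed to be later the same day


def answer_network_question(directory: dict, provider_name: str, plan: str, at: time) -> str:
    """Answer from the directory only; never guess when the record is missing."""
    provider = directory.get(provider_name.lower())
    if provider is None:
        return "I don't have a record for that provider, so I can't confirm network status."
    network = "in network" if plan in provider.in_network_plans else "not in network"
    status = "open" if provider.opens <= at < provider.closes else "closed"
    return (
        f"{provider.name} is {network} for plan {plan} "
        f"and is {status} at {at.strftime('%H:%M')}."
    )


# Hypothetical directory entry, keyed by lowercase provider name.
directory = {
    "cityside urgent care": Provider(
        name="Cityside Urgent Care",
        in_network_plans=frozenset({"PPO-1"}),
        opens=time(8, 0),
        closes=time(23, 0),
    )
}
late_answer = answer_network_question(directory, "Cityside Urgent Care", "PPO-1", time(22, 0))
unknown_answer = answer_network_question(directory, "Unknown Clinic", "PPO-1", time(22, 0))
```

A language model can still handle the conversation around this lookup, but the facts in the reply come from the record, and a missing record produces an explicit "can't confirm" instead of a plausible guess.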

While none of these opportunities have “easy buttons” to press, they all provide means for payers to differentiate themselves and better serve patients and providers. You can discover even more areas for payers and providers to win in the full podcast episode.

You Can’t AI Your Way Out of an Architecture Problem

The most consequential architectural decision most technology leaders make isn’t a decision at all. It’s a deferral — and it’s compounding silently underneath every AI and modernization investment they’re making simultaneously.

Architectural investment has long been classified as a technical cost: something you fund to manage debt, improve maintainability, or prepare systems for scale. An engineering concern, governed on engineering terms, deprioritized when business priorities compete for the same budget. For most of the last two decades, that classification was defensible. The debt accumulated slowly. The consequences were manageable. The constraint on delivery was human capacity, and architecture was a background condition that rarely determined outcomes directly.

That logic no longer holds. Architecture now determines the return on two of the most significant technology investments an organization can make — modernization and AI-enabled delivery — and most leadership teams are still evaluating it by rules that belong to a different era.

What AI reveals that was always true

The arrival of AI in the software delivery lifecycle hasn’t created the architectural problem. It has made it impossible to ignore.

AI amplifies the existing properties of the system it operates within. In environments where structure is clear, dependencies are explicit, and change boundaries are well-defined, gains compound across the delivery lifecycle. In environments where those properties are absent — where systems have grown opaque, where dependencies are hidden, where architectural intent has drifted from implementation reality — AI produces fragmented gains that never accumulate into delivery performance. The tools work. The system fails to give them what they need to work well.

This is why so many organizations are experiencing the same pattern: AI adoption is increasing, productivity metrics are improving, and overall delivery performance is moving only marginally. They’re tracking the wrong indicators — adoption rates, sprint velocity, feature throughput — none of which reveal the degree to which architectural opacity is capping the return on every one of those investments simultaneously.

Why the classification error persists — and why it’s now a strategic risk

AI has compressed the timeline on architectural debt in two ways. First, it has made the cost of opacity immediate rather than gradual — every AI capability you introduce runs into the ceiling faster than a human team would. Second, it has raised the economic stakes. The difference between an organization that can scale AI-enabled delivery and one that cannot is no longer a marginal productivity gap. It is becoming a structural difference in delivery economics — in how much engineering capacity you can generate, how fast you can release, and how quickly you can absorb and act on the output of machine-speed execution.

In that environment, treating architectural investment as a deferrable cost is not a conservative choice. It is an increasingly expensive one. The organizations that correct this classification earliest — that begin treating architectural clarity as a strategic capability rather than a technical liability — are the ones that will compound the advantages of every subsequent investment in AI delivery, modernization, and platform evolution.

Those that don’t will keep hitting the same ceiling with every new initiative. The tools will change. The constraint will not.

What the foundation actually requires

Establishing architectural clarity in a live enterprise environment is not a documentation exercise. Systems change faster than the knowledge used to describe them. What’s required is not a snapshot of how the system was designed — it’s a living, reliable model of how it actually behaves: how components interact, where dependencies run, where business logic lives, and where the boundaries of safe change actually sit.

That kind of understanding — what we call System Truth — is the foundation that makes both modernization and AI-enabled delivery scalable. It gives modernization teams the precision to determine impact before changes are made rather than discovering consequences in production. It gives AI agents the structural context they need to reason safely at the autonomy levels that produce real delivery gains.

Practices like software archeology address this directly by analyzing live systems to uncover hidden dependencies, reconstruct architectural relationships, and generate the machine-readable system context that makes confident change possible. It is a single investment that unlocks both modernization and AI-enabled delivery, and the organizations that establish it are in a fundamentally different position for every initiative that follows.

The question isn’t whether your architecture needs attention. It does. The question is whether your organization has recognized that the cost of deferring it is no longer just a technical debt problem — it is a ceiling on what you can build, how fast you can move, and how much return you will see from every investment sitting on top of it.

Architecture was always the inhibitor. AI has simply made that undeniable.

Getting to System Truth with Software Archeology

Successful modernization depends on System Truth—a reliable, system-level understanding of how code, data, and business logic actually interact. In practice, that means understanding how the system behaves as it runs—not just how it is designed on paper. It is the difference between believing a change will work and knowing in advance how it will behave once it is introduced.

That level of understanding is difficult to sustain. Systems evolve continuously—code changes, data flows shift, and logic accumulates—so any static view quickly drifts out of date. What was once considered safe becomes uncertain as dependencies shift and behavior changes. The issue is not a lack of information; it is the inability to maintain a coherent, current view of execution as the system evolves.

What System Truth requires

Understanding how a system behaves in execution requires a higher standard—one that goes beyond static views or partial insights. That standard cannot be met through any one view of the system.

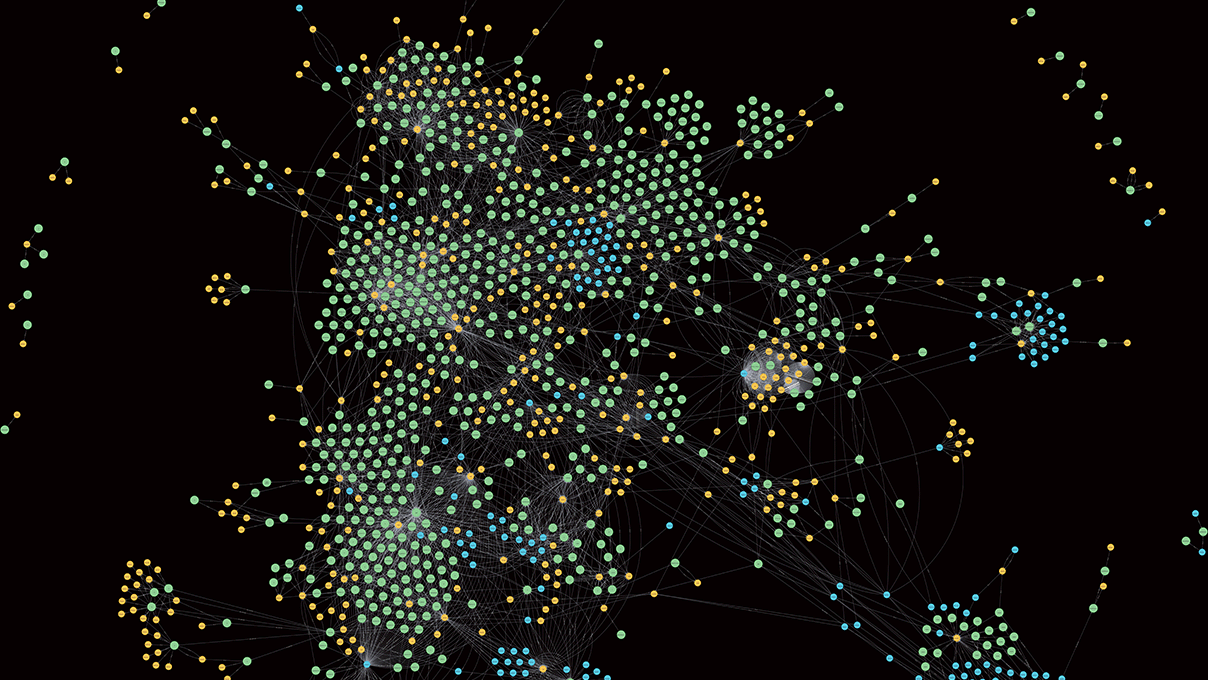

System Truth is not a single artifact or perspective. It is an execution-level understanding that emerges from three interdependent layers: structure, state, and intent.

Structure — what exists. The system’s composition—services, classes, APIs, schemas, and infrastructure—defines boundaries and relationships. This layer is typically well mapped by existing tooling.

State — how data moves. The flow and transformation of information across components introduces time and variability—how data is created, modified, and propagated. It explains how information travels through the system, but not why outcomes occur.

Intent — how decisions are made. The business logic embedded across the system is rarely centralized. It is distributed across services, conditionals, and transformations—often shaped by years of incremental change—and determines which paths execute and why.

Individually, each layer provides only a partial view—one that, when used in isolation, introduces systematic risk into decision-making. Together, they explain how the system behaves, enabling decisions grounded in how the system actually operates rather than assumptions.

Software archeology: Reconstructing behavior

Software Archeology makes system behavior explicit by reconstructing how structure, state, and intent interact in execution. It traces how logic propagates across services, how data influences decisions, and where dependencies form across boundaries.

This is a fundamentally different approach from traditional methods:

- Documentation captures intended design—but drifts from reality

- Static analysis enumerates structure—but does not explain behavior

- Observability shows runtime activity—but not full decision pathways

Software Archeology connects these fragments into a coherent model of execution. This shifts teams from interpreting systems to working from evidence.

In practice, it surfaces relationships that static views miss: cross-service dependencies, logic conditioned by events elsewhere, and execution paths that persist long after their original purpose has faded.

Consider a pricing function that appears isolated. Structure and lineage suggest clear boundaries and inputs. But when execution is reconstructed, a legacy authentication rule alters pricing via a shared transformation—an invisible dependency until it breaks in production.
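The pricing example can be pictured as a reachability problem on a dependency graph. The sketch below is a simplified illustration, not the Software Archeology tooling itself: the component names and edges are hypothetical, and it assumes the reconstructed execution paths have already been reduced to "depends on" edges.

```python
from collections import deque

# Hypothetical edges: each key depends on the components listed for it.
DEPENDS_ON = {
    "pricing.calculate": ["shared.normalize_customer"],
    "shared.normalize_customer": ["auth.legacy_region_rule"],
    "auth.legacy_region_rule": [],
    "catalog.lookup": [],
}


def transitive_dependencies(graph: dict, start: str) -> set:
    """Breadth-first walk collecting everything reachable from start."""
    seen = set()
    queue = deque(graph.get(start, []))
    while queue:
        node = queue.popleft()
        if node in seen:
            continue
        seen.add(node)
        queue.extend(graph.get(node, []))
    return seen


direct = set(DEPENDS_ON["pricing.calculate"])
hidden = transitive_dependencies(DEPENDS_ON, "pricing.calculate") - direct
```

Looking only at direct edges, the pricing function appears to depend on a single shared transformation. Following the edges one step further leaves `auth.legacy_region_rule` in `hidden`, which is the dependency that would otherwise surface only when it breaks.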

From behavior to execution confidence

Execution confidence—the ability to predict the impact of change before it is introduced—comes from a behavioral model of the system that captures how decisions propagate, how data shapes execution, and how outcomes are produced under real conditions.

This changes how modernization is executed:

- Impact is determined rather than approximated

- Dependencies are identified before changes are made

- Testing is focused on the pathways that actually drive outcomes

Instead of over-testing, teams target what matters. Instead of discovering issues in production, they surface them in advance. Instead of coordinating around conflicting interpretations, they operate from a shared, evidence-based model.
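Targeting tests at the pathways that matter can be sketched as the reverse of a dependency lookup: start from the component being changed, walk to everything that depends on it, and keep only the tests that touch that set. The graph, test names, and coverage map below are hypothetical, and the sketch assumes such a map is available from the behavioral model.

```python
from collections import defaultdict, deque

# Hypothetical "depends on" edges and test coverage, for illustration only.
DEPENDS_ON = {
    "pricing.calculate": ["shared.normalize_customer"],
    "shared.normalize_customer": ["auth.legacy_region_rule"],
    "auth.legacy_region_rule": [],
    "catalog.lookup": [],
}
TEST_COVERS = {
    "test_pricing_totals": ["pricing.calculate"],
    "test_catalog_search": ["catalog.lookup"],
    "test_login": ["auth.legacy_region_rule"],
}


def impacted_components(depends_on: dict, changed: str) -> set:
    """Return the changed component plus everything that depends on it."""
    dependents = defaultdict(set)
    for component, deps in depends_on.items():
        for dep in deps:
            dependents[dep].add(component)

    impacted = {changed}
    queue = deque([changed])
    while queue:
        node = queue.popleft()
        for dependent in dependents[node]:
            if dependent not in impacted:
                impacted.add(dependent)
                queue.append(dependent)
    return impacted


def select_tests(covers: dict, impacted: set) -> list:
    """Keep only the tests that exercise at least one impacted component."""
    return sorted(name for name, parts in covers.items() if impacted & set(parts))


impacted = impacted_components(DEPENDS_ON, "auth.legacy_region_rule")
tests_to_run = select_tests(TEST_COVERS, impacted)
```

For a change to the legacy authentication rule, this selects `test_login` and `test_pricing_totals` and skips `test_catalog_search`, because nothing in the catalog path is reachable from the change.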

Execution becomes predictable—not because systems are simpler, but because their behavior is understood.

Enabling precision in modernization

This is not an incremental improvement in how teams work—it is a structural shift in how uncertainty is managed. Without System Truth, teams are forced to manage uncertainty through process: more coordination, more validation, more buffering against unknowns.

With System Truth, that burden moves out of process and into the system itself. Understanding is embedded, and dependencies are visible. This is what enables precision—not just faster execution, but more controlled, predictable outcomes.

Software Archeology makes this level of precision possible by turning system behavior into something that can be understood, queried, and acted on.

Beyond the foundation

Software Archeology is the foundation—but it’s one part of a larger approach to modernization that replaces assumption with evidence at every stage. Our whitepaper, Intelligent Modernization: Adding Clarity, Velocity, and Verifiable Value to Legacy Transformation, details how leading technology organizations establish System Truth, sequence change with confidence, and accelerate delivery without absorbing unnecessary risk. If your teams are navigating complex legacy systems, this is the playbook.
