How to Create an Augmented Reality App

Augmented Reality (AR) is an innovative technology that presents an enhanced view of the real world around you. A good example is displaying match scores alongside the live football action at the ground. This is done by an augmented reality application that uses vision-based recognition algorithms on the live camera image, overlays virtual objects on top of that real-world image, and displays the result on your camera preview screen.

In this post, we explain how to develop a sample AR app using the Qualcomm Vuforia SDK, one of the best SDKs available for building vision-based AR apps for smartphones and tablets. It is currently available for Android, iOS and Unity. The SDK handles the low-level details, which lets developers focus entirely on the application.

Conceptual Overview

We will begin with an overview of the key concepts used in AR. It's important to understand these before starting AR app development.

- Targets – Real-world objects or markers (such as barcodes or symbols). Targets are created with Vuforia Target Manager; once created, they can be used to track an object and display content over it. Targets can be image targets, cylindrical targets, user-defined targets, word targets, frame markers (2D image frames), virtual buttons (which can trigger an event) or cloud targets.

- Content – A 2D/3D object or video that we want to overlay on top of a target, for example an image or video displayed on the target.

- Textures – A texture is an OpenGL object containing one or more images that all have the same image format. The Vuforia AR system converts content into textures and renders them on the camera preview screen.

- Java Native Interface – In the Vuforia Android samples, lifecycle events are handled in Java, while tracking and rendering are written in native C++. JNI is used to communicate between Java and C++, since it lets Java code call native methods.

- Occlusion – A virtual object being hidden by a real object. Occlusion management establishes a relationship between virtual and real objects by using transparent shaders, so that graphics are displayed as if they are inside a real-world object.

- OpenGL ES – Vuforia uses OpenGL ES internally to render 2D and 3D graphics on the graphics processing unit (GPU). OpenGL ES is designed specifically for smartphones and embedded devices.

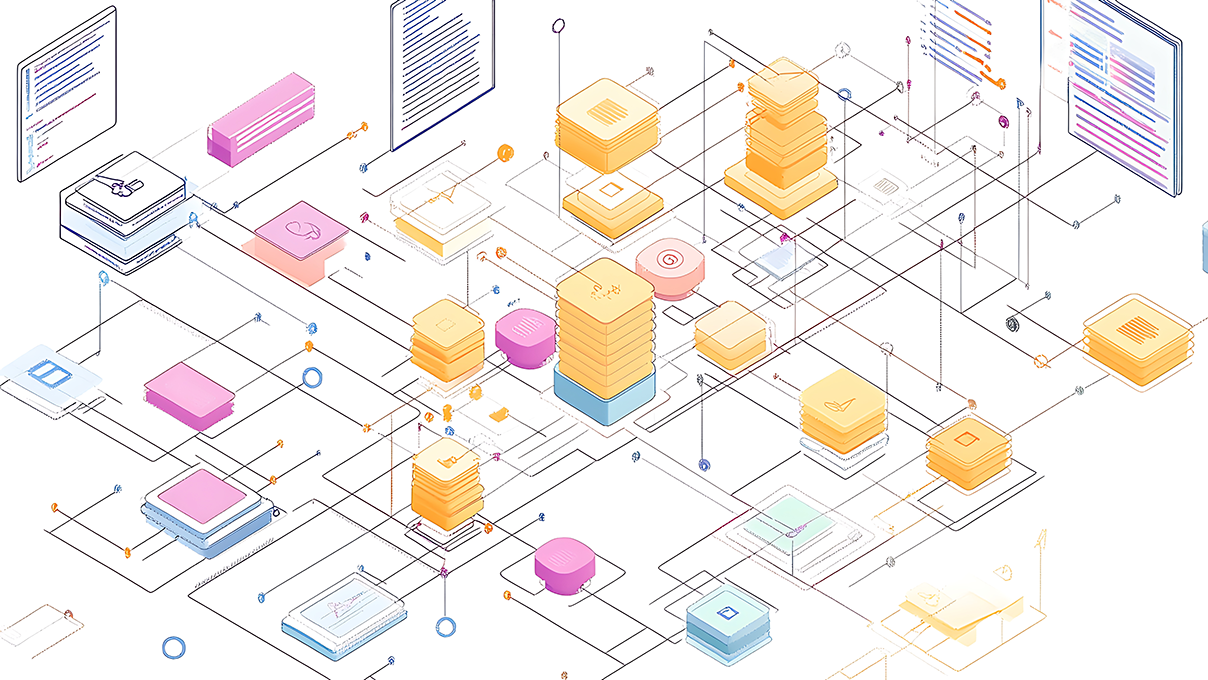

Vuforia Components

The above diagram shows the core components of a Vuforia AR application.

- Camera – Captures each preview frame and passes it to the tracker.

- Image Converter – The pixel format converter converts the default format of the camera to a format suitable for OpenGL ES rendering and tracking.

- Tracker – Detects and tracks real-world objects in the camera preview frame using image recognition algorithms. The results are stored in a State object, which is passed to the video background renderer.

- Video Background Renderer – Renders the camera image stored in the State object to the camera screen, as the background on which augmented content is drawn.

- Device Databases – The device database (or dataset) contains targets created with Target Manager. It consists of an XML configuration file and a binary .dat file.

- Cloud Databases – Can also be created with Target Manager and can hold up to 1 million targets, which are queried at run time.
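To make the data flow between these components concrete, here is a minimal Java sketch. It is a hypothetical model, not the Vuforia API: ArPipelineSketch, Frame, State, Tracker and describeRender are invented names, and it assumes the camera delivers NV21 frames (Android's default preview format). The converter step keeps only the luminance (Y) plane, the kind of grayscale image a tracker typically works on; in a real app the SDK performs these steps internally.

```java
import java.util.Arrays;
import java.util.List;

/**
 * Hypothetical model of the component data flow:
 * camera frame -> pixel format converter -> tracker -> State -> renderer.
 * None of these types belong to the Vuforia API.
 */
final class ArPipelineSketch {

    /** A camera preview frame in NV21, Android's default camera preview format. */
    static final class Frame {
        final int width;
        final int height;
        final byte[] nv21;

        Frame(int width, int height, byte[] nv21) {
            this.width = width;
            this.height = height;
            this.nv21 = nv21;
        }
    }

    /** Result of one tracking step, loosely modeled on the State object. */
    static final class State {
        final Frame cameraImage;
        final List<String> trackedTargets;

        State(Frame cameraImage, List<String> trackedTargets) {
            this.cameraImage = cameraImage;
            this.trackedTargets = List.copyOf(trackedTargets);
        }
    }

    /** Hypothetical stand-in for a tracker that recognizes targets in a grayscale image. */
    interface Tracker {
        List<String> detect(byte[] luminance, int width, int height);
    }

    /** Converter step: in NV21 the first width*height bytes are the Y (luminance) plane. */
    static byte[] luminance(Frame frame) {
        return Arrays.copyOf(frame.nv21, frame.width * frame.height);
    }

    /** Camera -> converter -> tracker, with the results packaged into a State. */
    static State process(Frame frame, Tracker tracker) {
        byte[] gray = luminance(frame);
        return new State(frame, tracker.detect(gray, frame.width, frame.height));
    }

    /** Stand-in for rendering: the camera image first, then content on any found targets. */
    static String describeRender(State state) {
        if (state.trackedTargets.isEmpty()) {
            return "camera image only";
        }
        return "camera image + content on " + String.join(", ", state.trackedTargets);
    }
}
```

Keeping the State immutable mirrors the idea that the renderer only reads what the tracker produced for that frame.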

Beginning Development

TargetManager – The first step is to create targets with Vuforia Target Manager for use in a device database. To do this, you first need to register for a free account on the Vuforia developer portal. This account can be used to download resources from Vuforia and post issues on the Vuforia forums. After logging in:

- Open Target Manager on the Vuforia website as shown above, and open the Device Databases tab.

- Click Create Database, then Add Target. A new target dialog opens.

- Select the image you want to use as the target from your local system. Only JPG or PNG images (RGB or grayscale) smaller than 2 MB are supported. An ideal image target is rich in detail, has good contrast and has no repetitive patterns.

- Select Single Image as the target type. Enter an arbitrary value as the width and click Create Target. After a short processing time, the target is created.

- If the target's rating is more than three stars, the target can be used for tracking. A higher rating indicates that the image is more easily tracked by the AR system.

- Add more targets in the same way. Then select the targets you want to download and click Download Selected Targets.

- The download contains a <database_name>.xml and a <database_name>.dat file. The .xml file is the configuration file listing the targets in the database, whereas the .dat file is a binary file containing the trackable data.
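The upload rules above can be expressed as a small pre-check you might run before uploading a batch of images. This is a hypothetical helper (TargetImageCheck is not part of any Vuforia tool); it assumes "2 MB" means 2 * 1024 * 1024 bytes and does not check the RGB/grayscale requirement. Target Manager remains the authority on whether an image is accepted and how it is rated.

```java
import java.util.Locale;

/**
 * Hypothetical pre-upload check mirroring the Target Manager rules described above.
 * Target Manager performs the real validation and computes the actual rating.
 */
final class TargetImageCheck {

    /** Assumed reading of the "less than 2 MB" limit, in bytes. */
    static final long MAX_BYTES = 2L * 1024 * 1024;

    /** The text says a rating of more than three is needed for tracking. */
    static final int MIN_RATING_EXCLUSIVE = 3;

    /** True for JPG/PNG files (by extension) below the size limit. */
    static boolean isSupportedFile(String fileName, long sizeInBytes) {
        String name = fileName.toLowerCase(Locale.ROOT);
        boolean jpgOrPng = name.endsWith(".jpg") || name.endsWith(".jpeg") || name.endsWith(".png");
        return jpgOrPng && sizeInBytes < MAX_BYTES;
    }

    /** True if the rating reported by Target Manager is high enough for tracking. */
    static boolean isTrackable(int rating) {
        return rating > MIN_RATING_EXCLUSIVE;
    }
}
```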

Installation and Downloading Sample Code

- Assuming you are already logged in to your Vuforia account, download the Vuforia SDK for Android and the Vuforia samples for Android.

- Extract the Vuforia SDK and place it in the same folder as your Android SDK folder. Copy the samples into the empty samples directory inside the Vuforia SDK folder and import the VideoPlayBack sample.

- In Eclipse, go to Window > Preferences, navigate to Java > Build Path > Classpath Variables and create a new variable.

- Enter QCAR_SDK_ROOT in the Name field and select your Vuforia SDK folder by clicking the Folder option.

- In your project's Java Build Path, check that vuforia.jar is exported on the Order and Export tab.

- Open copyVuforiaFiles.xml and, under <fileset>, set the path to the armeabi-v7a libraries. You can find this path in the build directory of your Vuforia SDK folder. In my case, on Linux, the path is: “/home/vineet/vineet/vuforia-sdk-android-2-8-8/build/lib/armeabi-v7a”

- With the previous step in place, libVuforia.so is copied into your project's libs directory when you build and run the app.

- The demo app already includes two markers and video files that you can use for testing. You can print the markers from the PDF files in the app's media folder.

- When you run the app, the camera view opens. When you point the camera at a marker, a video appears on the screen, overlaid on the marker.
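If the app fails at startup because the native library is missing, checking both locations from the setup steps can save time. The following Java sketch is hypothetical (NativeLibCheck is an invented name): it builds the SDK path from the build/lib/armeabi-v7a layout described above and assumes the project copy lands in libs/armeabi-v7a/, the usual per-ABI layout for Android native libraries.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/**
 * Hypothetical sanity check for the native-library setup steps.
 * Assumption: the build copies the library to <project>/libs/armeabi-v7a/.
 */
final class NativeLibCheck {
    static final String ABI = "armeabi-v7a";
    static final String LIB = "libVuforia.so";

    /** Where the SDK keeps the library (the directory referenced in copyVuforiaFiles.xml). */
    static Path sdkLibrary(String sdkRoot) {
        return Paths.get(sdkRoot, "build", "lib", ABI, LIB);
    }

    /** Assumed destination of the copied library inside the Android project. */
    static Path projectLibrary(String projectRoot) {
        return Paths.get(projectRoot, "libs", ABI, LIB);
    }

    /** True once the build has copied the library into the project. */
    static boolean isCopied(String projectRoot) {
        return Files.isRegularFile(projectLibrary(projectRoot));
    }
}
```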

Developer Workflow

When a user launches the sample app, it starts by creating and loading textures in loadTextures(). Here, bitmaps from the assets folder are converted into the textures the Vuforia SDK engine needs to render 3D objects in the AR view.
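Turning a bitmap into texture data typically involves reordering pixels. On Android, Bitmap.getPixels() returns packed ARGB ints, while OpenGL ES's glTexImage2D with GL_RGBA and GL_UNSIGNED_BYTE expects bytes in R, G, B, A order. The sketch below shows that conversion in plain Java; TextureData is a hypothetical name, and this is not presented as the sample's actual loadTextures() code.

```java
import java.nio.ByteBuffer;

/** Hypothetical helper that prepares pixel data for an OpenGL ES texture upload. */
final class TextureData {

    /**
     * Converts packed ARGB pixels (the layout Bitmap.getPixels() returns) into an
     * RGBA byte buffer suitable for glTexImage2D(..., GL_RGBA, GL_UNSIGNED_BYTE, ...).
     */
    static ByteBuffer argbToRgba(int[] argb, int width, int height) {
        if (argb.length != width * height) {
            throw new IllegalArgumentException(
                    "Expected " + (width * height) + " pixels, got " + argb.length);
        }
        // A direct buffer lives outside the Java heap, which suits native OpenGL ES calls.
        ByteBuffer rgba = ByteBuffer.allocateDirect(argb.length * 4);
        for (int pixel : argb) {
            rgba.put((byte) (pixel >>> 16)); // red
            rgba.put((byte) (pixel >>> 8));  // green
            rgba.put((byte) pixel);          // blue
            rgba.put((byte) (pixel >>> 24)); // alpha
        }
        rgba.rewind(); // reset the position so the upload reads from the first byte
        return rgba;
    }
}
```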

At the same time, the app starts configuring the engine by calling initAR(), which initializes the Vuforia SDK engine asynchronously. After successful initialization, the app loads the tracker data, also asynchronously: it loads and activates the datasets from the target XML file created with Target Manager.
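To see what the dataset-loading step works with, the target XML file can be read with the JDK's built-in DOM parser. This sketch assumes the file lists targets as ImageTarget elements with a name attribute inside QCARConfig/Tracking (for example <ImageTarget name="stones" size="247 173"/>); that layout is an assumption, so compare it with your own download. DatasetConfig is a hypothetical name, and on a device the SDK parses this file itself.

```java
import java.io.IOException;
import java.io.StringReader;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.parsers.ParserConfigurationException;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;
import org.xml.sax.InputSource;
import org.xml.sax.SAXException;

/** Hypothetical reader that lists image target names from a dataset XML file. */
final class DatasetConfig {

    /** Returns the name attribute of every ImageTarget element, in document order. */
    static List<String> imageTargetNames(String xml) {
        try {
            NodeList nodes = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new InputSource(new StringReader(xml)))
                    .getElementsByTagName("ImageTarget");
            List<String> names = new ArrayList<>();
            for (int i = 0; i < nodes.getLength(); i++) {
                names.add(((Element) nodes.item(i)).getAttribute("name"));
            }
            return names;
        } catch (ParserConfigurationException | SAXException | IOException e) {
            throw new IllegalArgumentException("Not a readable dataset XML file", e);
        }
    }
}
```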

Once the engine is configured, the app calls initApplicationAR(), which creates and initializes the OpenGL ES view and attaches a renderer to it; the renderer is required to display the video on top of the camera screen when an image target is found. The renderer requests loading of the videos through VideoPlaybackHelper and calls the OpenGL DrawFrame() method to track camera frames. During this process, OpenGL textures are created from the texture objects loaded at the beginning, along with textures for the video data.

The app then calls startAR() to start the camera. As soon as the camera starts, the renderer begins tracking frames. When an image target is found, the app displays the video, which can be configured either to play automatically or to start on tap.
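The two playback modes can be modeled as a small state machine driven by per-frame tracking results and taps. This Java sketch is hypothetical (VideoTrigger and its methods are invented names), and it assumes playback stops when the target is lost, which may differ from how the sample behaves.

```java
/**
 * Hypothetical model of the playback modes: start the video automatically when
 * the target is found, or wait for a tap while the target is visible.
 */
final class VideoTrigger {
    enum Mode { AUTOPLAY, TAP_TO_PLAY }

    private final Mode mode;
    private boolean targetVisible;
    private boolean playing;

    VideoTrigger(Mode mode) {
        this.mode = mode;
    }

    /** Called once per tracked camera frame with whether the image target was found. */
    void onFrame(boolean targetFound) {
        targetVisible = targetFound;
        if (!targetFound) {
            playing = false; // assumption: playback stops when tracking is lost
        } else if (mode == Mode.AUTOPLAY) {
            playing = true;
        }
    }

    /** A tap only starts playback while the target is visible. */
    void onTap() {
        if (targetVisible) {
            playing = true;
        }
    }

    boolean isPlaying() {
        return playing;
    }
}
```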

When the user closes the app, it unloads all resources in onDestroy().
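The ordering in this workflow can be summarized with a short CompletableFuture sketch. The method names mirror those in the text, but their bodies only record the call, so this illustrates the sequencing rather than the sample's implementation. It assumes the app waits for the textures as well as the engine before creating the OpenGL ES view, since the renderer builds its OpenGL textures from them.

```java
import java.util.List;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.CopyOnWriteArrayList;

/** Hypothetical illustration of the launch order; each step only logs its name. */
final class ArLifecycleSketch {
    final List<String> log = new CopyOnWriteArrayList<>(); // thread-safe: steps run on pool threads

    void loadTextures() { log.add("loadTextures"); }
    void initAR() { log.add("initAR"); }
    void loadTrackerData() { log.add("loadTrackerData"); }
    void initApplicationAR() { log.add("initApplicationAR"); }
    void startAR() { log.add("startAR"); }
    void onDestroy() { log.add("onDestroy"); }

    /** Textures load while the engine initializes; tracker data loads after the engine. */
    void launch() {
        CompletableFuture<Void> textures = CompletableFuture.runAsync(this::loadTextures);
        CompletableFuture<Void> engine = CompletableFuture.runAsync(this::initAR)
                .thenRun(this::loadTrackerData);
        // Assumption: wait for both before building the GL view and starting the camera.
        CompletableFuture.allOf(textures, engine).join();
        initApplicationAR();
        startAR();
    }
}
```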

Conclusion

In this post, we have seen how to use Vuforia to track image targets and display video on top of them. Vuforia can be used for many other purposes, such as text detection, occlusion management and run-time target generation from the cloud. To learn more about Vuforia, visit developer.vuforia.com.
