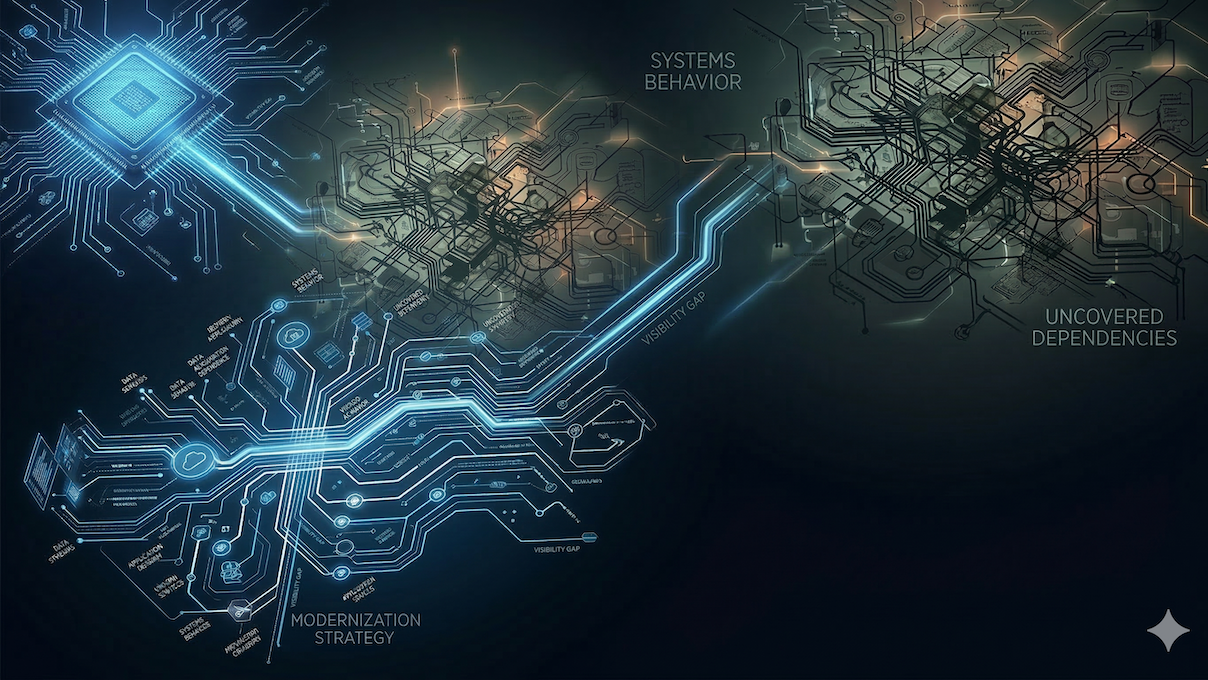

Modernization Decisions Built on Conflicting Answers

In complex enterprise environments, even basic questions about how systems behave do not have a single, reliable answer—and that becomes a critical constraint when organizations attempt to modernize.

For example: what will this change impact?

Ask that question across architecture, engineering, data, and operations, and you will get different answers. Architecture points to known integrations based on design intent. Engineering identifies additional affected paths in the code. Data teams trace downstream dependencies through pipelines and derived datasets. Operations teams surface impacts that only emerge under real runtime conditions, such as load, failover, and retries.

The problem is not a lack of information. Different parts of the organization produce different answers to the same question, and those answers do not reconcile into a single, consistent explanation of how the system behaves.

Why this matters for modernization

Under steady-state conditions, where changes are small and localized, this is manageable. Most work stays within a single domain, and teams can make decisions based on what they can see without needing to understand how the entire system behaves.

Modernization removes that latitude. It forces a single decision from competing answers: which services will break, which data pipelines will be affected, and which behaviors will change once the system is modified.

When the same question yields different answers, decisions cannot be verified—they are negotiated. Progress slows as teams reconcile perspectives, testing expands to compensate for uncertainty, and accountability diffuses because no answer is authoritative. The impact is measurable: longer timelines, higher costs, and engineering capacity spent on rework and defensive validation.

Modernization breaks here—not in execution, but in the inability to produce a single, reliable decision before change is made.

Why visibility breaks down in practice

Organizations attempt to improve visibility through better documentation, updated diagrams, and more advanced analysis tools. Each of these approaches assumes that the problem is incomplete or outdated information.

Documentation captures intended design. Diagrams reflect architectural relationships. Analysis tools expose structure in code or data. But these layers do not reconcile. The architecture view diverges from runtime behavior. Code does not fully capture data dependencies. Runtime signals do not explain design intent.

As a result, visibility improves within domains, but inconsistency persists across them. The organization can see more—but still cannot form a single, coherent answer to how the system actually behaves.

System Truth as an operating requirement

Better visibility alone does not solve this problem. A unified model does.

We call this System Truth—a single, continuously reconciled representation of how the system actually behaves across code, data, and runtime, providing the full context needed to evaluate change with confidence. It resolves all perspectives into one verifiable answer.

With System Truth, questions like "What will this change impact?" produce a single, consistent answer before change is made. Decisions can be verified rather than inferred. Without it, organizations are forced to choose between competing answers and learn the truth only after execution, where cost and risk are highest.

System Truth changes how modernization operates. It replaces assumption with verification and allows teams to understand impact before change is made—reducing risk, increasing speed, and enabling controlled execution.

From inconsistent answers to System Truth

The visibility challenge is not just a matter of incomplete information. It stems from the absence of a unified system model. Until organizations can produce a single, reliable answer to how their systems behave, modernization will continue to depend on trial, error, and post hoc validation.