Agentic Testing: Moving Quality From Checkpoint to Control Layer

Agentic testing raises the bar for quality leadership. As AI agents enter test planning, scenario generation, script creation, execution, failure analysis, and self-healing, QA leaders are no longer governing only test assets, automation suites, and defect workflows. They are governing an AI-assisted decision system that directly affects release confidence.

That changes the standard.

Faster testing is not stronger quality. More generated scenarios do not automatically mean better coverage. A passing test suite does not automatically prove release readiness. A healed script is not automatically an improvement. Without clear standards for validation, traceability, and accountability, agentic testing can create the most dangerous form of delivery risk: confidence without evidence.

The mandate is clear: agentic testing must strengthen the quality signal, not simply increase the volume of testing activity. QA leaders must define what inputs agents can trust, what outputs require review, when remediation can be applied, when failures must escalate, and what evidence is required before a release decision changes.

At 3Pillar, we are exploring this shift through a multi-agent testing framework that connects quality workflows more directly into software delivery. Its significance is not that it automates more QA work. It shows how quality engineering can become a more adaptive, auditable, and accountable control layer as AI changes the pace of software delivery.

Agentic testing changes what QA leaders govern

Most QA leaders already know AI can accelerate parts of the testing lifecycle. The more important question is where AI should be allowed to influence quality decisions. That is the leadership shift.

In an agentic model, QA leaders must govern not only what tests exist, but how they were generated, what assumptions shaped them, what risks they map to, how failures were interpreted, and whether automated remediation changed the meaning of the test.

The governance surface expands from test cases and execution results to the behavior of the AI-assisted quality system itself. Requirements quality, decision boundaries, output review, traceability, escalation, and auditability all become part of the quality operating model. These standards determine whether agentic testing builds confidence or simply accelerates activity.

If requirements are vague, environments are unstable, business rules are poorly understood, or release criteria are inconsistent, AI will not resolve that disorder. It will expose the disorder faster and, without governance, may amplify it.

The risk: Speed without evidence

The greatest risk in agentic testing is not that AI fails to produce enough output. It is that AI produces output that appears authoritative without creating real confidence. Self-healing makes that risk especially clear.

Some failures are caused by brittle selectors, environment instability, or legitimate UI changes that do not affect business behavior. In those cases, assisted healing can reduce maintenance burden and improve automation resilience. Other failures signal product regressions, changed requirements, broken workflows, or misalignment between expected and actual behavior. If those failures are automatically healed without review, the organization may lose an essential quality signal.
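One way to picture that distinction is a small triage rule that decides whether a failure is eligible for assisted healing or must go to a person. This is a minimal sketch, not the framework's implementation: the `FailureCause` categories mirror the examples in the paragraph above, while the `triage` function, the `confidence` score, and the 0.9 threshold are hypothetical names and values chosen for illustration.

```python
from enum import Enum


class FailureCause(Enum):
    # Causes the text describes as reasonable candidates for assisted healing.
    BRITTLE_SELECTOR = "brittle_selector"
    ENVIRONMENT_INSTABILITY = "environment_instability"
    NON_BEHAVIORAL_UI_CHANGE = "non_behavioral_ui_change"
    # Causes the text describes as real quality signals.
    PRODUCT_REGRESSION = "product_regression"
    CHANGED_REQUIREMENT = "changed_requirement"
    BROKEN_WORKFLOW = "broken_workflow"
    BEHAVIOR_MISMATCH = "behavior_mismatch"
    # Anything the classifier cannot place.
    UNKNOWN = "unknown"


HEALABLE = frozenset({
    FailureCause.BRITTLE_SELECTOR,
    FailureCause.ENVIRONMENT_INSTABILITY,
    FailureCause.NON_BEHAVIORAL_UI_CHANGE,
})


def triage(cause: FailureCause, confidence: float, min_confidence: float = 0.9) -> str:
    """Return "heal" only for a healable cause classified with high confidence.

    Everything else, including low-confidence classifications of healable
    causes, is escalated so the quality signal is not silently lost.
    The 0.9 default is an illustrative assumption, not a recommendation.
    """
    if cause in HEALABLE and confidence >= min_confidence:
        return "heal"
    return "escalate"
```

The design choice worth noting is the default: escalation is the fallback, so an unrecognized or uncertain failure never gets healed by omission.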

Self-healing should not be measured by how many tests an agent can repair. It should be measured by whether the integrity of the test remains intact.

The same standard applies to coverage. Agentic testing can generate more scenarios faster than traditional manual planning, but volume is not coverage quality. A large set of generated tests may still miss the workflows that carry the highest customer, revenue, compliance, or operational risk.

Quality leaders must define what confidence means. Which business-critical workflows are covered? Which failure modes matter most? Which edge cases, negative paths, and integration points require explicit validation? Where is human review required? What gaps remain even after AI expands the test set?
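The first of those questions can be made concrete with a simple gap check. The sketch below assumes a team keeps a list of workflows flagged as business-critical and a mapping from workflow name to the tests that exercise it; the `Workflow` type, the `coverage_gaps` function, and the sample data are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    name: str
    business_critical: bool


def coverage_gaps(workflows, tests_by_workflow):
    """Return the names of business-critical workflows with no mapped test."""
    return [
        w.name
        for w in workflows
        if w.business_critical and not tests_by_workflow.get(w.name)
    ]


# Illustrative data: many generated tests, but none for a critical workflow.
workflows = [
    Workflow("checkout", business_critical=True),
    Workflow("refund", business_critical=True),
    Workflow("profile_avatar", business_critical=False),
]
tests_by_workflow = {
    "checkout": ["test_checkout_happy_path"],
    "profile_avatar": ["test_upload", "test_crop", "test_remove", "test_resize"],
}

gaps = coverage_gaps(workflows, tests_by_workflow)
```

Here five tests exist, yet `gaps` contains `"refund"`: the test count went up without covering one of the workflows that carries the most risk.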

Agentic testing only creates enterprise value when speed is matched by evidence.

How 3Pillar is applying the model

At 3Pillar, our multi-agent testing framework is designed to bring agentic quality workflows into real engineering environments.

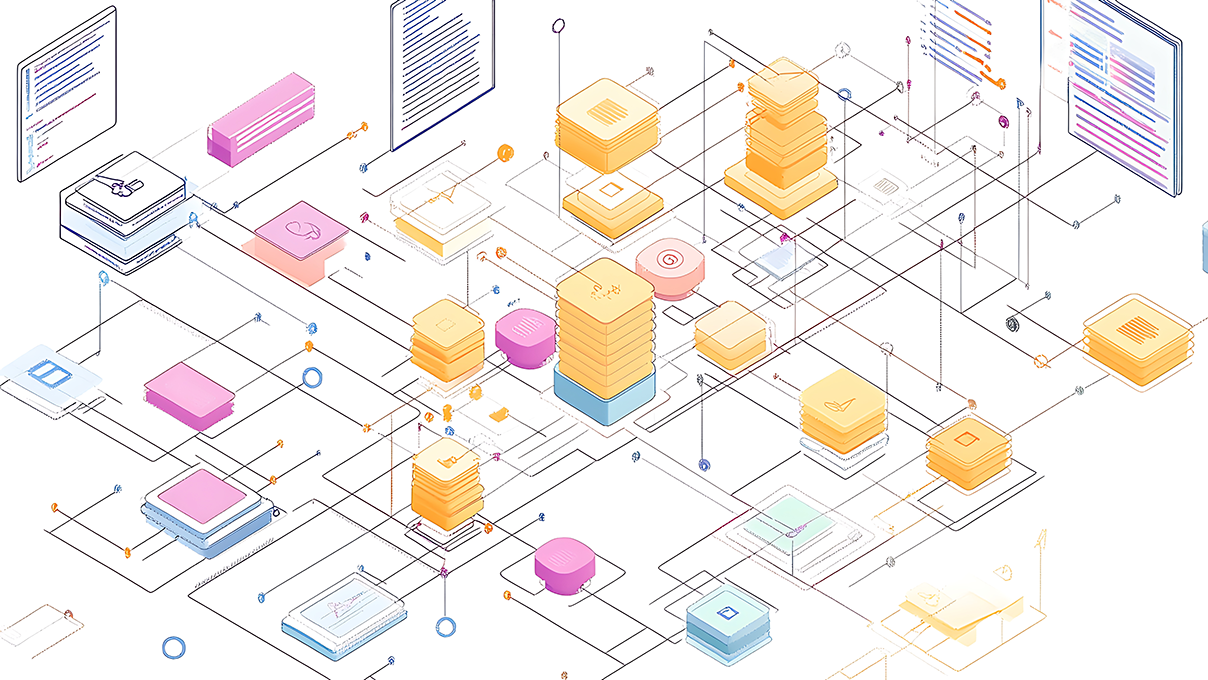

The framework includes three core agent roles:

- Planner Agent: analyzes user stories, business requirements, acceptance criteria, application URLs, preconditions, and expected behaviors to generate structured test plans

- Generator Agent: converts approved test plans into automation scripts using established engineering practices such as Page Object Model architecture, reusable fixtures, stable selectors, and modular test data

- Healer Agent: monitors test execution, identifies failures, supports recovery for certain classes of broken tests, and escalates unresolved failures for review

These agents are integrated with delivery tools including Jira, GitHub, Playwright, and CI/CD pipelines. The workflow can move from story analysis to test planning, script generation, execution, reporting, and delivery workflow updates with less manual intervention.
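The hand-off between the Planner and Generator roles can be sketched as a small pipeline with an approval gate, since the Generator works from approved test plans. This is an illustrative stand-in only: `PlanDraft`, `plan_from_story`, `generate_scripts`, and the story ID are hypothetical, and the real agents, Jira integration, and Playwright script output are not modeled here.

```python
from dataclasses import dataclass


@dataclass
class PlanDraft:
    story_id: str
    scenarios: list
    approved: bool = False  # flipped by a human reviewer, never by an agent


def plan_from_story(story_id, acceptance_criteria):
    """Planner stand-in: derive one scenario per acceptance criterion."""
    return PlanDraft(story_id, [f"Verify: {c}" for c in acceptance_criteria])


def generate_scripts(plan):
    """Generator stand-in: refuse any plan that has not been approved."""
    if not plan.approved:
        raise PermissionError(f"Plan for {plan.story_id} is not approved")
    prefix = plan.story_id.lower().replace("-", "_")
    return [f"{prefix}_scenario_{i}" for i, _ in enumerate(plan.scenarios, start=1)]


draft = plan_from_story(
    "SHOP-42",
    ["cart total updates", "empty cart shows a message"],
)
draft.approved = True  # simulated human sign-off
scripts = generate_scripts(draft)
```

Raising an error on an unapproved plan, rather than generating anyway and flagging it later, keeps the review step from being skipped under schedule pressure.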

The framework is intentionally designed around more than automation speed. It reflects a broader shift toward quality workflows that are integrated, reviewable, and connected to delivery decisions. The goal is not a fully autonomous QA function. The goal is to reduce repetitive effort while preserving the oversight required to ensure generated tests, healed scripts, and reported outcomes remain trustworthy.

Speed only matters when the quality signal remains reliable.

What leaders must put in place

Agentic testing cannot scale on tooling alone. It requires a governance model strong enough to protect the quality signal.

Before scaling agentic testing, QA and engineering leaders must define:

- Input and coverage standards: What level of detail is required in user stories, acceptance criteria, expected behaviors, and business-risk mapping?

- Decision boundaries: Which agent actions can happen automatically, and which require human approval?

- Review and evidence thresholds: What must be reviewed before generated tests, healed scripts, or reported outcomes are trusted?

- Traceability and auditability: How are requirements, tests, failures, changes, and release decisions connected and inspected after the fact?

- Escalation and accountability: Which failures must be routed for review, and who owns the final release judgment when AI agents participate in quality workflows?

These controls are not adoption barriers. They are what make adoption scalable.

Without them, agentic testing creates more motion without more confidence. With them, it becomes a meaningful control layer, one that helps teams accelerate delivery while maintaining accountability for quality outcomes.
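Decision boundaries and auditability, two of the controls listed above, become easier to enforce when they are written down as data rather than left as convention. The following is a minimal sketch under stated assumptions: the action names, the `DECISION_BOUNDARIES` table, and the `is_allowed` and `audit_entry` helpers are hypothetical, and which actions belong in which column is a decision each organization has to make for itself.

```python
AUTO = "auto"
HUMAN_APPROVAL = "human_approval"

# Hypothetical policy table: which agent actions may run unattended.
DECISION_BOUNDARIES = {
    "generate_test_plan": AUTO,
    "heal_selector": AUTO,
    "generate_script": HUMAN_APPROVAL,
    "modify_assertion": HUMAN_APPROVAL,
    "change_release_status": HUMAN_APPROVAL,
}


def boundary_for(action):
    """Look up an action's boundary; unknown actions require approval."""
    return DECISION_BOUNDARIES.get(action, HUMAN_APPROVAL)


def is_allowed(action, approved_by=None):
    """An action may proceed if it is automatic or a named person approved it."""
    return boundary_for(action) == AUTO or approved_by is not None


def audit_entry(action, approved_by=None):
    """Build a record that can be inspected after the fact."""
    return {
        "action": action,
        "boundary": boundary_for(action),
        "approved_by": approved_by,
        "allowed": is_allowed(action, approved_by),
    }
```

Actions missing from the table fall back to requiring approval, so a new agent capability does not become automatic just because nobody has classified it yet.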

Turning speed into trust

Agentic testing marks an important shift in AI-enabled software delivery. It moves quality beyond a downstream checkpoint and toward a continuously operating control layer, one that helps teams manage speed, complexity, and risk as AI changes the pace of engineering work.

The organizations that win with agentic testing will not be the ones that automate the most activity. They will be the ones that build the strongest quality control layer: one where AI accelerates the work, humans govern the evidence, and release confidence becomes more transparent, auditable, and resilient.

At 3Pillar, we see multi-agent testing as one example of how AI-enabled delivery is becoming more operational, more integrated, and more accountable. For a deeper look at the technical framework behind this approach — including the agent architecture, workflow stages, integrations, and implementation considerations — read my detailed technical overview.

About the author