From Copilots to Agents: Where Most Organizations Stall

As organizations move beyond early AI adoption, the conversation is beginning to shift. The focus is no longer just on what copilots can accelerate, but on how AI should be applied beyond individual tasks—and what it takes to move from isolated assistance to more coordinated, system-level execution.

This next step, moving from copilots to agents, typically emerges as organizations progress from early AI-assisted workflows to more structured, scaled adoption. It is often framed as a tooling upgrade. In practice, it is something more fundamental. Teams don’t encounter friction here because AI falls short. They encounter it because the surrounding system hasn’t kept pace.

This is not simply a technology transition. It is a shift in how control is exercised across the delivery system.

When execution outpaces decision-making

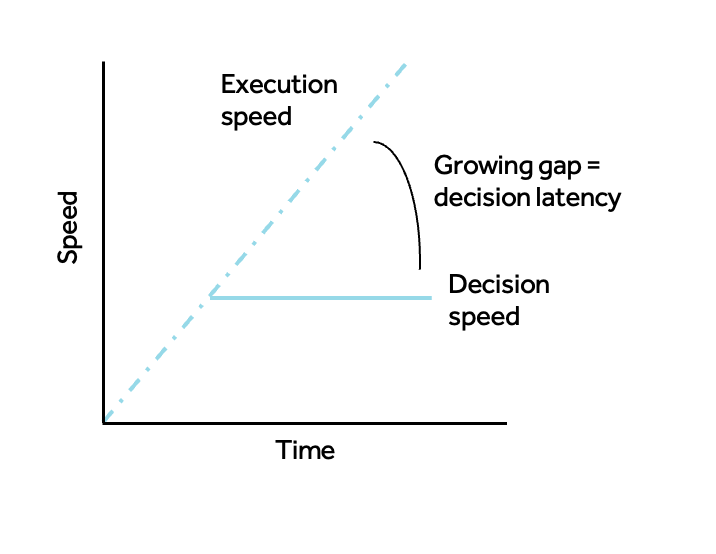

What actually changes at this stage is not tooling—it’s the relationship between execution speed and decision speed.

Copilots increase execution speed inside an unchanged decision model: humans still prioritize, review, and approve at a fixed cadence. Agents begin to compress execution into continuous flows. When that happens, the system’s throughput is governed by how quickly it can decide, validate, and enforce constraints, not by how quickly it can produce code.

This creates a new gap: execution can run ahead of decision-making.
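The gap can be made concrete with a little arithmetic. The sketch below is a toy model, not a measurement: the function name `review_backlog` and all rates are hypothetical, chosen only to show what happens when changes are produced faster than a fixed-cadence review step can decide on them.

```python
from typing import List


def review_backlog(produced_per_day: int, reviewed_per_day: int, days: int) -> List[int]:
    """Return how many changes are still waiting for a decision at the end of each day."""
    backlog = 0
    history = []
    for _ in range(days):
        backlog += produced_per_day                 # new changes arrive
        backlog -= min(backlog, reviewed_per_day)   # reviewers clear what they can
        history.append(backlog)
    return history


# Hypothetical rates: review capacity stays fixed while output grows.
copilot_era = review_backlog(produced_per_day=8, reviewed_per_day=10, days=5)
agent_era = review_backlog(produced_per_day=25, reviewed_per_day=10, days=5)

print(copilot_era)  # [0, 0, 0, 0, 0]
print(agent_era)    # [15, 30, 45, 60, 75]
```

In the first case the decision step absorbs everything that is produced. In the second, the queue grows by the difference between the two rates every day, regardless of how good the generated changes are.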

The transition, then, is not about adding more capability. It is about designing a control plane for delivery—how intent is defined in clear, testable terms (e.g., acceptance criteria and specs), how constraints are encoded as guardrails (tests, policies, and checks in pipelines), and how decisions are applied continuously through automated validation rather than periodic human reviews. Human oversight shifts to defining intent, validating outcomes, and governing exceptions—not performing every step.

Whether organizations succeed or stall depends on how they approach this shift. Those that treat agents as a faster execution layer run into friction. Those that rethink how decisions are made and enforced begin to unlock sustained progress.

Where decision latency emerges

At this stage, the constraint is no longer flow but decision latency. In earlier stages of maturity, where teams are still standardizing AI use and improving flow, the challenge is moving work through the system. Here, the challenge is deciding on that work fast enough, and friction appears wherever decision-making cannot keep pace with execution.

Decisions at machine speed depend on two things: having the right signals to act on, and having the right points in the system where those decisions are applied.

- Decision signals (Context + Governance): Decisions about impact, safety, and release readiness depend on clear, machine-consumable signals. When test coverage is incomplete, dependencies are unclear, or policies aren’t encoded in pipelines, those decisions fall back to humans—introducing latency before changes can proceed.

- Decision checkpoints (Operating model): Decisions about code quality and readiness are deferred to PR reviews, sprint cycles, and release calendars. As output increases, these checkpoints queue up, shifting the bottleneck from execution to validation and creating stop/start delivery.

Neither of these is a failure. Both indicate that the system’s decision model is still calibrated for a different operating speed, one where decisions were expected to follow execution rather than keep pace with it.

Critically, these constraints are not resolved through better tools. They are inherent to the system itself. Expanding AI capability without evolving how control operates within that system increases activity—more PRs, more test runs, more changes—without improving the system’s ability to convert that activity into delivered outcomes.
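To illustrate how missing signals push decisions back to humans, here is a minimal sketch of an automated gate. Everything in it is an assumption made for illustration: the `ChangeSignals` fields, the `MIN_COVERAGE` threshold, and the three outcomes are hypothetical and do not describe any particular CI/CD product.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional, Tuple


class Decision(Enum):
    PROCEED = "proceed"
    BLOCK = "block"
    ESCALATE = "escalate_to_human"


@dataclass
class ChangeSignals:
    """Signals a pipeline might attach to a change. None means 'not available'."""
    tests_passed: Optional[bool] = None
    coverage_of_changed_lines: Optional[float] = None  # 0.0 to 1.0
    security_findings: Optional[int] = None
    dependencies_mapped: Optional[bool] = None


MIN_COVERAGE = 0.80  # hypothetical policy threshold


def decide(signals: ChangeSignals) -> Tuple[Decision, List[str]]:
    """Decide automatically when signals are complete; otherwise hand off to a person."""
    gaps = [f"missing signal: {name}"
            for name, value in vars(signals).items() if value is None]
    if signals.dependencies_mapped is False:
        gaps.append("dependencies unclear")
    if gaps:
        return Decision.ESCALATE, gaps  # the machine cannot decide, so latency appears here

    violations = []
    if not signals.tests_passed:
        violations.append("tests failed")
    if signals.coverage_of_changed_lines < MIN_COVERAGE:
        violations.append("coverage below threshold")
    if signals.security_findings > 0:
        violations.append("open security findings")
    if violations:
        return Decision.BLOCK, violations
    return Decision.PROCEED, []


complete = ChangeSignals(tests_passed=True, coverage_of_changed_lines=0.92,
                         security_findings=0, dependencies_mapped=True)
partial = ChangeSignals(tests_passed=True, security_findings=0)

print(decide(complete))  # proceeds with no reasons attached
print(decide(partial))   # escalates: coverage and dependency signals are missing
```

The point of the sketch is the first branch: the gate only runs at machine speed when every signal it needs is present. Each gap in context or governance becomes a human handoff.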

What progress looks like in practice

Organizations that move forward make a small number of concrete changes that shift decision-making into the system.

- Define intent up front: Work begins with clear, testable acceptance criteria and specs that agents can execute against—reducing ambiguity later in the flow.

- Move validation into pipelines: Quality and safety checks shift from PR comments to automated tests, security scans, and policy checks enforced in CI/CD.

- Start with bounded workflows: Teams pilot agent-driven flows in specific use cases (e.g., test generation for a service, bug triage, or safe refactoring) before expanding scope.

- Shorten or remove manual gates: Release readiness and compliance checks become continuous signals rather than scheduled approvals.

- Redefine roles around control: Engineers spend more time validating outcomes and refining guardrails; product teams focus on precise intent and completeness of context.

The objective is not maximum automation. It is extending control—through embedded guardrails and human oversight concentrated on intent, validation, and exception handling—so autonomy can scale safely and consistently.
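As one possible illustration of defining intent up front in a bounded workflow, the sketch below states acceptance criteria as executable checks before any implementation exists, then validates a candidate against them. The task (a `slugify` helper), the `Criterion` type, and the `unmet` function are all hypothetical. In practice, criteria like these would more likely live in a team's existing test suite.

```python
import re
from typing import Callable, List, NamedTuple


class Criterion(NamedTuple):
    description: str
    check: Callable[[Callable[[str], str]], bool]


# Intent for a hypothetical slugify task, written before any code is produced.
CRITERIA = [
    Criterion("lowercases the title", lambda f: f("Hello") == "hello"),
    Criterion("replaces spaces with hyphens", lambda f: f("a b c") == "a-b-c"),
    Criterion("drops punctuation", lambda f: f("Hi, there!") == "hi-there"),
    Criterion("returns empty string for empty input", lambda f: f("") == ""),
]


def unmet(candidate: Callable[[str], str], criteria: List[Criterion]) -> List[str]:
    """Return descriptions of the criteria the candidate does not satisfy."""
    failures = []
    for criterion in criteria:
        try:
            ok = criterion.check(candidate)
        except Exception:
            ok = False  # a crash counts as an unmet criterion
        if not ok:
            failures.append(criterion.description)
    return failures


def candidate_slugify(title: str) -> str:
    """Stand-in for agent-produced code."""
    words = re.findall(r"[a-z0-9]+", title.lower())
    return "-".join(words)


print(unmet(candidate_slugify, CRITERIA))  # []
print(unmet(str.lower, CRITERIA))          # ['replaces spaces with hyphens', 'drops punctuation']
```

An empty list means the candidate can move on without a person reading it line by line. A non-empty list is a precise, machine-readable reason to send the work back.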

The leadership shift

The leadership question is no longer how to expand AI adoption or where AI can be applied, but how to design a delivery system where control can be distributed, enforced, and trusted at machine speed—and where decisions are made early enough to keep pace with execution.

In practice, this means identifying where validation is deferred, where intent is underspecified, and where control still depends on manual intervention—and then systematically moving those decisions earlier and into the system itself.

At 3Pillar, we work with engineering leaders to identify where this transition is already creating friction in their delivery systems—and to redesign how decisions are made, validated, and enforced so AI-driven execution can translate into real, measurable delivery outcomes.